What’s new?

The magazine Additive Manufacturing reported on 14 August 2020 on the use of graphene in 3D printing, also called additive manufacturing. Additive manufacturing uses filaments of plastic, sometimes embedded with other materials, often fibers, to increase the strength or improve other properties of the printed object. In this case, the ability of graphene to carry heat means that the resulting object cools more uniformly, reducing the tendency of layers to separate after printing.

On 24 August, the company Paragraf reported in the online journal EE Times that it “has developed an innovative method of producing graphene at scale.”

What does it mean?

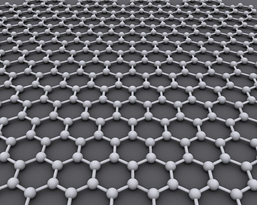

Graphene is an arrangement of carbon atoms in connected six-sided shapes, as shown in the picture above. The arrangement is only one carbon atom thick, making graphene a two-dimensional sheet of material. In 2004, two researchers at the University of Manchester used sticky tape to peel off layers of carbon from graphite to create graphene. They shared the Nobel Prize in Physics in 2010 for their research on the material.

Graphene has useful properties. For example, it is very conductive; that is, electrons flow easily through it. This property is important in Hall effect sensors, which use the interaction of magnetism and electricity to detect position, for example, to detect the level of a floating magnet in a gas tank and thus the amount of gas in a car. Graphene is also extremely strong for its weight, it is transparent and flexible, and, as mentioned above, it conducts heat well.

Research on new materials holds great promise, and it is usually research on composites of different elements. Graphene, carbon nanotubes, and buckminsterfullerenes (also called fullerenes or buckyballs, but not the dangerous small round magnets sold under that name) are fascinating to me because they are just carbon, which is also the building block of all life on planet Earth (and, memorably, the signature of infestation in the 1979 movie Star Trek: The Motion Picture). Do an internet search on “my favorite element is carbon” and you will be rewarded with fascinating information about the element with atomic number 6. Not to mention carbon’s use in that old, old communication device, the pencil.

I should have known better, but I thought we would be farther along by now in practical applications of graphene. Graphene was hailed as a miraculous substance with great promise, with the 2010 Nobel Prize citation predicting that “a vast variety of practical applications now appear possible including the creation of new materials and the manufacture of innovative electronics.” A 2012 article in New Scientist was more cautiously optimistic: “Hundreds of applications have been suggested to take advantage of graphene’s remarkable properties. Some are more realistic than others, and difficulties remain to be overcome with all – but from computer chips to touchscreens, there are some promising ideas in the pipeline.”

Progress has been slow. However, even recognizing that graphene had been noticed before 2004 (the term graphene dates from 1961), the time scale of discovery, research, and application is typically very long. My favorite example is the fax, which was invented in 1843.

The Paragraf article that I cited at the start of this post discussed one ever-present barrier, difficulties in manufacturing: “These challenges mean there has been a lack of contamination-free, transfer-free, large-area graphene available in the market, and adoption in mass-market electronics remains slow. New solutions are clearly required if graphene is to make its mark in the electronics sector.” You cannot study a substance and, even more, you cannot apply it widely until the substance can be manufactured at a reasonable cost with high quality.

What does it mean for you?

Progress in technology is often slower than we want but also sometimes slower than we perceive it to be – many an “overnight sensation” in any field is actually the result of years of hard work. Graphene is fitting into that usual story very well. Progress is happening, with Patentscope showing 64,126 worldwide patents containing the word graphene at the time I am writing this sentence. But the University of Manchester, the birthplace of graphene, even says: “There are many thousands of patents relating to graphene. Many of these are unlikely to become reality.”

One key is improvements in manufacturing; another is that graphene may be useful when added to other substances (as in the additive manufacturing article cited at the start of this post) or when traces of other substances are added to it. On 29 January 2020 New Scientist reported that the addition of guano, yes, guano, improves some properties of graphene. New Scientist delights in the title of the paper it cites as the source of this information: “Will any crap we put into graphene increase its electrocatalytic effect?” Thus it seems that, again, alloys or composites of different materials are in our future. A great deal of our technological past can also be interpreted as learning about the importance of mixing substances, as in the development of steel from iron, which depended crucially on the percentage of carbon in the recipe, and learning about the importance of specific manufacturing methods, such as temperatures and methods for heating and quenching steel.

A 2019 article in Digital Trends lists potential applications of graphene in flexible electronics, solar cells/photovoltaics, semiconductors, water filtration, and mosquito defense, a list that makes graphene seem poised to solve several of the problems facing our world’s sustainability goals. As with any new substance, we need to worry about potential safety hazards, and the answer to the question “is graphene safe?” seems to be that we don’t know yet if it is safe in all formulations and uses.

You are likely to see new products that incorporate graphene, such as the Hall effect sensors I described above. Indeed, you may have already, but you are also likely to be unaware of the presence of graphene in those products. The history of technology is the discovery of new materials and then the painstaking and slow development of applications. I am eager to see what the future holds for graphene. Also, with graphite, diamonds, fullerenes, carbon nanotubes, and now graphene, I am eager to see what other tricks carbon has up its sleeve.

Where can you learn more?

Graphenea has a good technical description of the properties of graphene.

Wikipedia has a good list of potential applications of graphene.

Many state fairs are cancelled or scaled back this year. The Colorado State Fair has its eye on the important stuff with the Drive Through Fair Food event.